AI & LLM Deployment at the Edge

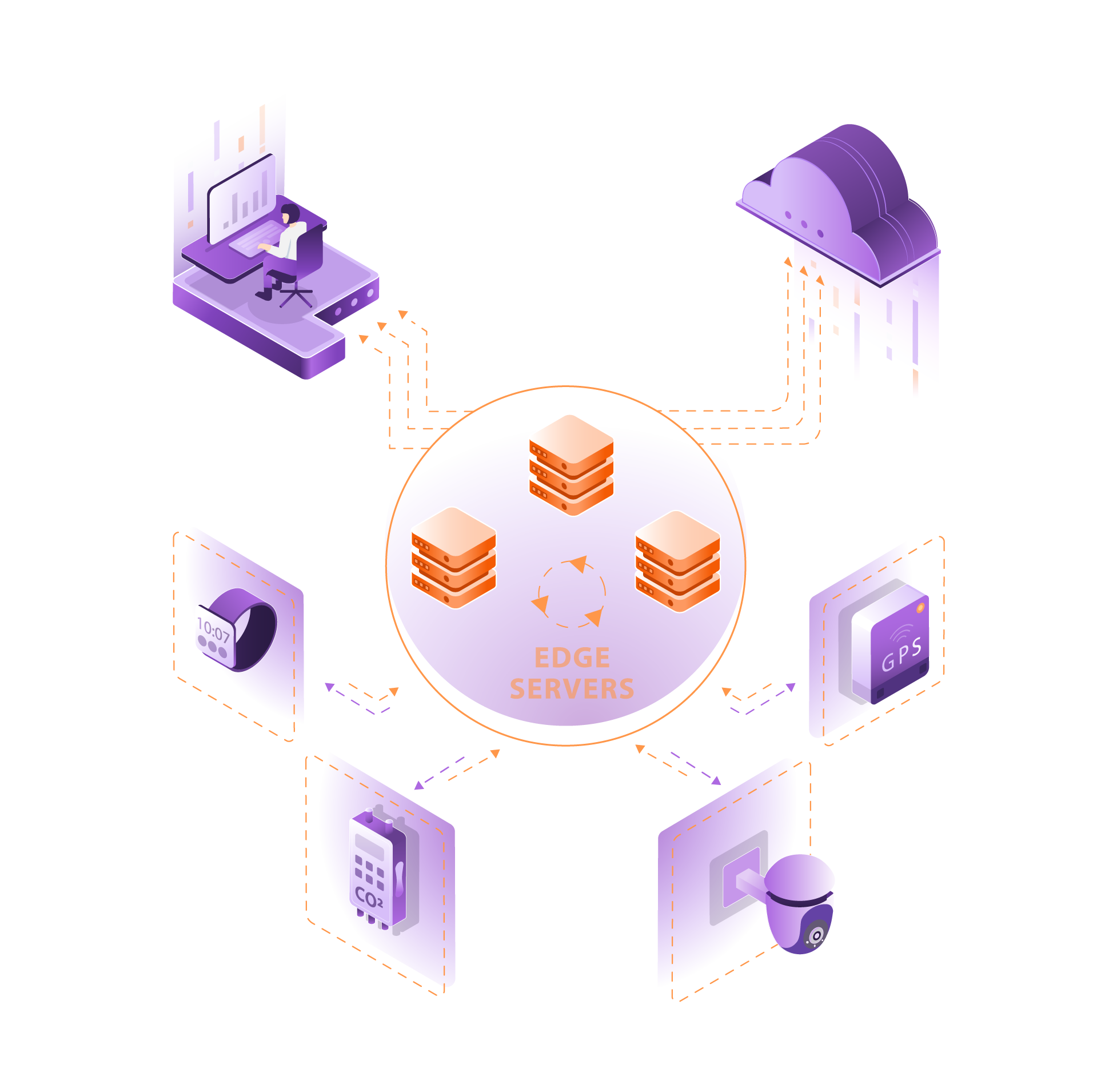

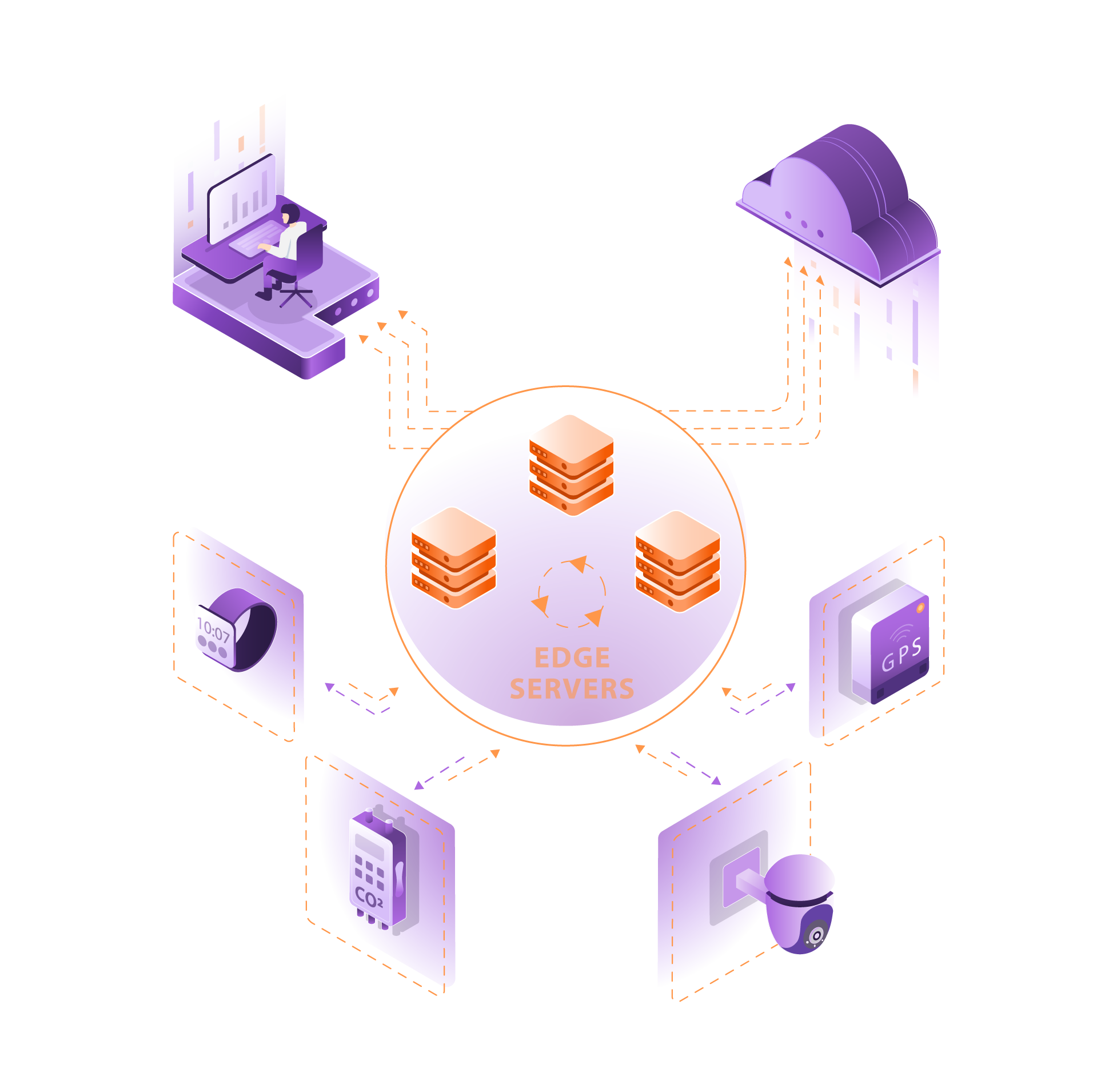

At Edge Solutions Lab (ESL), we deliver end-to-end deployment of AI models and large language models (LLMs) optimized for edge environments — where performance, privacy, and real-time decision-making are critical.

Our team designs the entire deployment stack, from architecture design and model optimization to continuous monitoring and lifecycle management. We build automated pipelines that handle distribution, updates, and performance tracking — even in remote or resource-constrained locations.

Each model is fine-tuned for hardware efficiency, low latency, and resilience, ensuring it can analyze, decide, and act instantly — right where the data is generated. With ESL, your AI and LLM workloads run reliably, securely, and intelligently at the edge.

The Advantages of Application & AI Deployment at the Edge

Technical Advantages

Optimized for Low Latency & High Throughput.

AI/ML Model Orchestration.

Cross-Platform Flexibility.

Application Containerization.

Continuous Optimization.

Reliability & Security Benefits

Secure AI Deployment.

Regulatory Alignment.

Resilient Edge Environments.

Privacy-Preserving AI.

Business & Operational Advantages

Faster Time-to-Insight.

Lower Bandwidth & Cloud Costs.

Scalable AI Workloads.

Lifecycle Model Management.

Seamless Integration.

Flexible Engagement Models.

Ready to implement Software Deployment for Edge Applications?

How It’s Made

Software Deployment for Edge Applications

At Edge Solutions Lab, software deployment is a collaborative process between DevOps engineers and application developers. We build deployment pipelines that are tailored to the constraints and requirements of edge environments — including limited connectivity, remote locations, and hardware variability.

- Containerized applications are packaged as Docker or other OCI-compliant images and delivered through lightweight orchestrators such as K3s or MicroK8s, or through systemd-based init flows where a full orchestrator is too heavy.

- Competitive Benchmarking: We study existing market solutions (platforms, frameworks, embedded systems, and edge devices) to see what already exists, who’s doing it well, and where the gaps are.

- We implement CI/CD pipelines that support multi-architecture builds, automated testing, and staged rollouts, ensuring safe delivery across a distributed edge fleet.

- All deployments include telemetry hooks, auto-healing mechanisms, and logging agents to enable remote monitoring and troubleshooting.

- OTA updates (over-the-air) are version-controlled, secure, and rollback-capable — designed for both Linux-based and embedded systems.

Our process guarantees that application logic, system services, and orchestration agents are consistently deployed, updated, and monitored — even in offline or constrained conditions.
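To make the staged-rollout and rollback idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not ESL's actual tooling: the `Device` record, the `staged_rollout` function, and the `health_check` callback are all hypothetical names, and a real pipeline would push signed artifacts to devices rather than change a version string in memory.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Device:
    """Hypothetical edge device record; field names are illustrative."""
    name: str
    version: str
    previous_version: str = ""


def staged_rollout(
    fleet: List[Device],
    new_version: str,
    stages: List[float],
    health_check: Callable[[Device], bool],
) -> bool:
    """Update the fleet in growing stages; roll back every update on failure.

    `stages` holds cumulative fleet fractions, e.g. [0.1, 0.5, 1.0].
    Returns True if the whole rollout passed its health checks.
    """
    updated: List[Device] = []
    done = 0
    for fraction in stages:
        target = int(len(fleet) * fraction)
        for device in fleet[done:target]:
            device.previous_version = device.version
            device.version = new_version
            updated.append(device)
        done = max(done, target)
        # Gate the next stage on the health of every device updated so far.
        if not all(health_check(d) for d in updated):
            for d in updated:
                d.version = d.previous_version
            return False
    return True
```

Calling `staged_rollout(fleet, "1.1.0", [0.1, 0.5, 1.0], health_check)` would update 10% of the fleet first, then 50%, then all of it, and restore the previous version everywhere if any updated device fails its check.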

AI on the Edge: Model Optimization, Not Just Expansion

Rather than simply “pushing cloud models” to edge devices, we specialize in optimizing AI models for local inference. This includes architectural redesign, compression, and runtime tuning to match the constraints of edge hardware (TPUs, NPUs, GPUs, FPGAs).

- Model compression techniques: pruning, quantization (INT8, FP16), knowledge distillation

- Conversion and optimization pipelines: ONNX, TensorRT, OpenVINO, TFLite, Edge TPU compiler

- Runtime adaptation: memory-efficient batching, edge-oriented pre/post-processing, fused inference pipelines

- Hardware benchmarking: measuring latency, throughput, thermal impact, and power consumption across target edge platforms

We don’t just move models closer to the user — we rebuild them for performance, efficiency, and autonomy in the field.
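As one example of the compression techniques listed above, the sketch below shows symmetric per-tensor INT8 quantization in plain Python. It is a simplified illustration, not the scheme used by any particular toolchain: production converters such as TensorRT or TFLite add calibration data, per-channel scales, and zero points. The function names `quantize_int8` and `dequantize` are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: w is approximated by q * scale,
    with q an integer in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid division by zero for an all-zero (or empty) tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from INT8 values."""
    return [v * scale for v in q]
```

Each weight is stored in one byte instead of four, and the round-trip error per weight is bounded by half of `scale`. Whether that error is acceptable depends on the model and has to be checked against accuracy on real data.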

Ready to explore how to implement Software Deployment for Edge Applications?

Is Application & AI Deployment at the Edge Right for Your Project?

Define Your Performance & AI Requirements

List the essential capabilities your application or AI model must deliver — inference speed, data throughput, connectivity, integration with sensors or devices, and compliance with privacy standards. Consider edge-specific constraints such as power limits, hardware variability, and connectivity interruptions.

Evaluate Existing Cloud-Centric Approaches

Check whether traditional cloud or centralized infrastructure can meet your real-time needs. If network latency, bandwidth costs, or security risks are too high, deploying at the edge may be the better choice.
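A simple way to frame this check is a latency budget: add model inference time to the network round trip and compare the total with your response deadline. The Python sketch below does this for two options. All figures are hypothetical placeholders chosen for illustration, and the function names are assumptions; substitute measurements from your own environment.

```python
from typing import Dict


def end_to_end_ms(inference_ms: float, network_rtt_ms: float) -> float:
    """Total response time: model inference plus network round trip."""
    return inference_ms + network_rtt_ms


def within_budget(
    options: Dict[str, Dict[str, float]], deadline_ms: float
) -> Dict[str, bool]:
    """Map each deployment option to whether it meets the deadline."""
    return {
        name: end_to_end_ms(o["inference_ms"], o["network_rtt_ms"]) <= deadline_ms
        for name, o in options.items()
    }


# Hypothetical figures for illustration only; measure your own.
OPTIONS = {
    "cloud": {"inference_ms": 15.0, "network_rtt_ms": 80.0},
    "edge": {"inference_ms": 40.0, "network_rtt_ms": 0.0},
}
print(within_budget(OPTIONS, deadline_ms=50.0))
```

With these placeholder numbers, the cloud path misses a 50 ms deadline despite faster inference, because the round trip alone exceeds the budget, while the slower edge device meets it.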

Analyze Cost, Scale & Lifecycle

Estimate operating costs, data transfer expenses, and model update frequency. Edge deployment often becomes more cost-effective when workloads are high-volume, latency-sensitive, or when regulations require local data processing.

Plan for Scalability & Future Adaptability

Consider whether your deployment should support future AI model updates, modular expansions, or multi-location rollouts. Building adaptability early enables smoother scaling without re-engineering later.

Engage with an Edge AI Deployment Expert

The Edge Solutions Lab team helps you assess feasibility, optimize model performance, design deployment pipelines, and manage ongoing updates — ensuring your applications and AI systems are efficient, secure, and production-ready.

Let’s find out if Edge is the right fit — and what it could mean for your future

The sooner you evaluate your Edge readiness, the sooner you can unlock faster response times, smarter automation, and scalable digital operations.

Frequently Asked Questions

What is AI deployment at the edge?

AI deployment at the edge means running artificial intelligence algorithms and models directly on edge devices, enabling real-time data processing and decision-making without relying heavily on cloud servers. This approach lets AI systems operate closer to data sources, such as IoT devices and sensors.

How does edge computing enhance AI capabilities?

Edge computing enhances AI capabilities by facilitating real-time AI processing and reducing latency. By processing data at the network edge, AI systems can deliver faster insights and make immediate decisions. This is especially crucial for applications that require instant responses, such as autonomous vehicles and industrial automation.

What are the primary benefits of deploying AI at the edge?

The primary benefits of deploying AI at the edge include reduced reliance on cloud computing, improved response times, enhanced data privacy, and lower bandwidth costs. Edge AI systems can analyze data locally, minimizing the need to transmit large volumes of information to cloud servers for processing.

What types of devices can utilize edge AI technology?

Various devices, including resource-constrained edge devices such as sensors, cameras, and industrial machines, can leverage edge AI. These devices are equipped with edge AI models that enable on-site AI inference and data processing, resulting in more efficient operations.

What are some applications of edge AI?

Applications of edge AI include real-time monitoring and analytics in smart cities, predictive maintenance in manufacturing, and enhanced user experiences in retail. Examples of edge AI include facial recognition systems, anomaly detection in IoT, and autonomous drones processing data locally.

How does the integration of edge computing and AI improve decision-making?

The integration of edge computing and AI improves decision-making by enabling AI systems to process data in real-time at the edge, allowing for timely and accurate responses to changing conditions. This is particularly beneficial in scenarios where immediate action is necessary, such as in healthcare or emergency response systems.

What future trends are expected in the edge AI market?

Future trends in the edge AI market indicate a growing adoption of edge AI applications across various industries, driven by advancements in AI frameworks and technologies. As the demand for real-time processing and reduced latency increases, more organizations will explore deploying AI at the edge to enhance their operational efficiency and capabilities.

How does the deployment of AI at the edge reduce bandwidth costs?

The deployment of AI at the edge reduces bandwidth costs by minimizing the amount of data that needs to be transmitted to cloud servers for processing. By processing data locally on edge devices, organizations can significantly decrease their data transmission volume, thereby lowering costs associated with data transfer and storage.
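A rough back-of-the-envelope calculation shows the scale of the effect. The Python sketch below compares a camera that streams video to the cloud continuously with one that runs inference locally and uploads only small event records. The bitrate, event size, and event frequency are hypothetical values chosen for illustration, and `monthly_gb` is an assumed helper name.

```python
def monthly_gb(bytes_per_second: float, days: int = 30) -> float:
    """Data volume in gigabytes (10^9 bytes) for a sustained upload rate."""
    seconds = 86_400 * days
    return bytes_per_second * seconds / 1e9


# Hypothetical camera: a 4 Mbit/s video stream sent to the cloud around the clock.
STREAM_BYTES_PER_S = 4_000_000 / 8

# Hypothetical edge setup: local inference, one 2 KB event record per minute.
EVENT_BYTES_PER_S = 2_000 / 60

cloud_gb = monthly_gb(STREAM_BYTES_PER_S)
edge_gb = monthly_gb(EVENT_BYTES_PER_S)
print(f"cloud streaming: {cloud_gb:.1f} GB/month")
print(f"edge events:     {edge_gb:.4f} GB/month")
```

Under these assumptions, continuous streaming uploads roughly 1,296 GB per camera per month, while the event-only approach uploads under 0.1 GB. Actual savings depend on how much raw data still has to leave the device, for example for retraining or audit.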

What role does AI play in Cloud-to-Edge convergence?

AI models are often trained in the cloud but deployed and refined at the edge. This enables context-aware, real-time intelligence in each environment — from predictive maintenance in factories to smart retail analytics.

How does Edge Solutions Lab help with Cloud-to-Edge convergence?

We provide an end-to-end framework: from feasibility studies, hardware/firmware/software design, and integration, to deployment, AI optimization, validation, and long-term scaling. Our solutions are tailored to complex real-world conditions and mission-critical environments.